Investigating the Utility of Explainable Artificial Intelligence for Neuroimaging-Based Dementia Diagnosis and Prognosis

Abstract

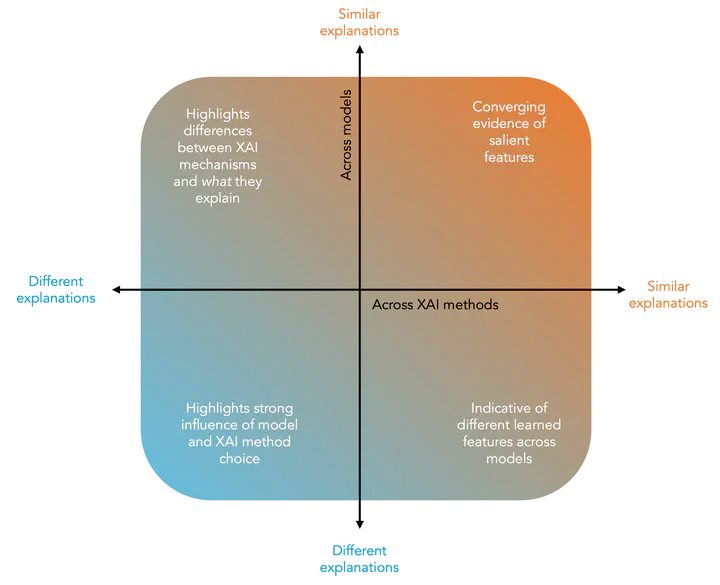

Artificial intelligence and neuroimaging enable accurate dementia prediction but often involve ‘black box’ models that can be difficult to trust. Explainable artificial intelligence (XAI) aims to provide insights into a model’s decisions; however, choosing the most appropriate method is non-trivial and often context-specific. We used T1-weighted MRI to train models on two tasks: Alzheimer’s disease (AD) classification (diagnosis) and prediction of conversion from mild cognitive impairment (MCI) to all-cause dementia (prognosis). We applied eleven XAI methods across two popular image classification architectures, producing visualisations of the most salient regions. We also propose a framework for interpreting explanations produced by different XAI methods and predictive models. Models achieved balanced accuracies of 81% for diagnosis and 67% for prognosis. XAI outputs highlighted brain regions relevant to AD, with strong convergence across gradient-based techniques. LIME produced the explanations that were most similar across architectures. Mean saliency improved MCI prognosis prediction when included as an additional input feature. XAI can be used to verify that models are utilising relevant features and to generate valuable measures for further analysis.
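The abstract reports balanced accuracy rather than plain accuracy. As a minimal sketch of the standard definition (the mean of per-class recall), the snippet below shows how the two can differ when classes are imbalanced. The function name `balanced_accuracy` and the labels are hypothetical illustrations, not the study's code or data, and the abstract does not state how the metric was computed.

```python
from collections import defaultdict


def balanced_accuracy(y_true, y_pred):
    """Return the mean of per-class recall (standard balanced accuracy)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("at least one label is required")

    total = defaultdict(int)    # number of samples per true class
    correct = defaultdict(int)  # correctly predicted samples per true class
    for t, p in zip(y_true, y_pred):
        total[t] += 1
        if t == p:
            correct[t] += 1

    # Recall for each class that appears in y_true, then the unweighted mean.
    recalls = [correct[c] / total[c] for c in total]
    return sum(recalls) / len(recalls)


# Hypothetical, made-up labels (1 = AD, 0 = control); NOT data from the study.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0]

plain_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
score = balanced_accuracy(y_true, y_pred)

print(f"plain accuracy:    {plain_accuracy:.3f}")
print(f"balanced accuracy: {score:.3f}")
```

In this toy case the classifier misses half of the minority class, which plain accuracy largely hides and balanced accuracy penalises. This is one reason the metric is commonly preferred when diagnostic groups differ in size.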